Lightweight and Personalized Single-Eye Emotion Recognition via CNN-SNN Spatiotemporal Learning and Memory-Inferred Event Features (TCSVT 2026)

Abstract

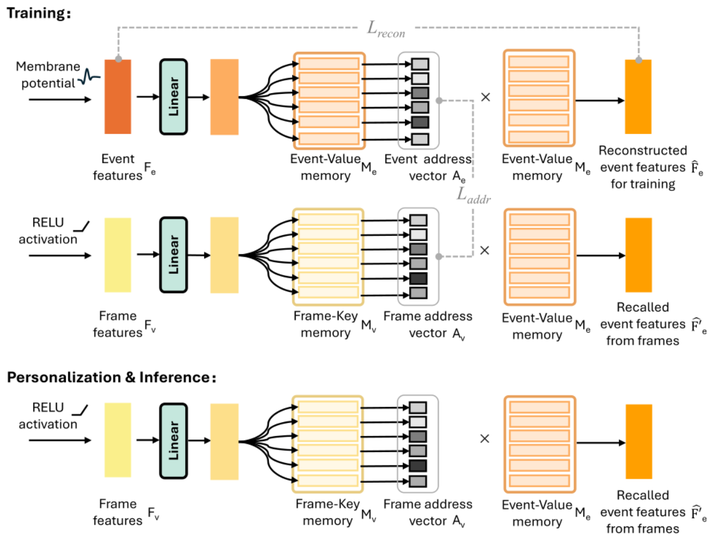

Emotion recognition is essential for improving user experience and interaction quality in human-centered applications. Recent studies have combined event cameras with traditional cameras to enhance eye-based emotion recognition, but their practical deployment is hindered by the scarcity of event cameras and the complexity of dual-modality frameworks. The same factors hamper personalization, which is critical for handling individual differences in emotional expression, and reduce both its performance and its adaptation efficiency. To address these challenges, we propose LPSEER, a lightweight and personalized single-eye emotion recognition network. LPSEER introduces a novel hybrid neural architecture that integrates a convolutional neural network (CNN) and a spiking neural network (SNN) to capture spatiotemporal features from video frames and events, respectively. Additionally, we design a memory-based event feature inference (MEFI) module that recalls event features from video frames, eliminating the reliance on event cameras during inference and personalization while retaining the discriminative advantages of event-based representations. Experimental results demonstrate that LPSEER achieves state-of-the-art recognition accuracy with the smallest model size and lowest computational cost among the compared methods. Further experiments confirm that LPSEER generalizes well and personalizes faster and more accurately. Together, these advantages enable lightweight, accurate, and efficient emotion recognition for real-world human-centered applications.
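The idea of recalling event features from video frames can be illustrated with a generic key-value memory read. The abstract does not specify how MEFI is implemented, so the following is only a minimal sketch under stated assumptions: a frame-derived query vector is compared against stored keys by dot product, and the softmax-weighted sum of stored values stands in for the recalled event feature. The function name `recall_event_feature`, the `temperature` parameter, and the toy memory contents are all hypothetical and are not taken from the paper.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def recall_event_feature(frame_feature, memory_keys, memory_values, temperature=1.0):
    """Read an event-like feature from a key-value memory (illustrative only).

    frame_feature: query vector derived from a video frame (assumed input).
    memory_keys:   one key vector per memory slot, same length as the query.
    memory_values: one stored event-feature vector per memory slot.
    Returns the recalled feature and the attention weights over the slots.
    """
    # Dot-product similarity between the query and every key.
    scores = [
        sum(q * k for q, k in zip(frame_feature, key)) / temperature
        for key in memory_keys
    ]
    weights = softmax(scores)

    # The recalled feature is the attention-weighted sum of the stored values.
    dim = len(memory_values[0])
    recalled = [
        sum(w * value[d] for w, value in zip(weights, memory_values))
        for d in range(dim)
    ]
    return recalled, weights


if __name__ == "__main__":
    # Toy memory with two slots; values are made-up event-feature prototypes.
    keys = [[1.0, 0.0], [0.0, 1.0]]
    values = [[10.0, 0.0, 0.0], [0.0, 10.0, 0.0]]
    feature, weights = recall_event_feature([5.0, 0.0], keys, values)
    print(weights)
    print(feature)
```

In this sketch, a query that aligns with the first key draws almost all of its recalled feature from the first stored value, so no event input is needed at read time. In a trained system the keys and values would be learned while event data is available, which is an assumption about the general technique rather than a description of LPSEER itself.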